Dear AuntMinnie Member,

Well, the day has finally arrived -- the first day of virtual RSNA 2020. With two bangs of a gavel this morning, RSNA 2020 President Dr. James Borgstede opened this year's conference in a video presentation to virtual conference attendees.

In his opening address, Borgstede pointed out that radiologists are no strangers to technology of the type being used to deliver the virtual meeting. He spoke of the role that technology -- and radiologists -- play in flattening the world, driving globalization and creating equal opportunities.

Many of these technologies are on display at RSNA 2020, the most obvious of which are artificial intelligence (AI) and deep learning. But technology for technology's sake shouldn't be radiology's ultimate goal -- imaging specialists should instead endeavor to use technology to become more human in the practice of medicine.

"There is no substitute for human-to-human interaction in radiology," Borgstede said, echoing a thought that many no doubt are having this week.

Our coverage of RSNA 2020 can be found in our RADCast @ RSNA. Although the meeting is just a day old, you'll already find a wealth of articles and videos on the proceedings.

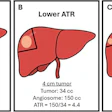

The biggest story of the year has been COVID-19 (in fact, that's why we're all at home this week instead of in Chicago). And perhaps the go-to imaging modality for the diagnosis of COVID-19 patients has been CT. In a scientific session on Sunday afternoon, researchers from multiple groups discussed how they have been using CT to manage patients, with at least two groups reporting on their use of AI.

You can also learn about an AI algorithm developed by researchers from South Korea to detect and quantify brain infarcts on diffusion-weighted MRI (DWI-MRI) scans.

On the video side, we're pleased to feature interviews with radiology thought leaders, including Dr. Ruth Carlos, who discussed her perspective on how radiologists can reintegrate themselves into patient care, and Elizabeth Krupinski, PhD, who offered her view on how radiology's conversion to digital imaging has contributed to radiologist burnout. You can also learn about a new portable CT scanner that is winning plaudits.

We'll be featuring coverage from virtual RSNA 2020 for the rest of the week, so be sure to return to rsna.auntminnie.com early and often. And for even more rapid updates, be sure to follow us on Twitter.

