An AI model developed by Stanford researchers shows promise for reading CT scans like a radiologist would, according to a study published March 4 in Nature.

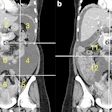

The model, called Merlin, is a 3D vision-language model (VLM) designed specifically for abdominal CT interpretation. It analyzes full CT volumes rather than individual 2D slices, wrote a team led by Louis Blankemeier, PhD, of Stanford.

"[We found that] Merlin maintained consistent and strong performance when evaluated on over 44,000 external CT scans spanning multiple sites and anatomies," Blankemeier and colleagues noted.

An estimated 300 million CT scans are performed worldwide each year, and a quarter of these are abdominal exams. A single scan can consist of more than 300 slices, which makes interpretation time-consuming. Without a "commensurate increase in the number of radiologists, there is an extensive interpretation burden on the field of radiology," the investigators explained. Machine learning could help mitigate radiologist shortages.

Blankemeier's group trained Merlin with a clinical dataset comprising more than six million CT images, nearly two million diagnosis codes from electronic health records, and more than six million "tokens" of radiology report text. They evaluated its performance across 752 individual tasks spanning the following six categories:

- Zero-shot classification of imaging findings

- Phenotype prediction from ICD codes

- Cross-modal retrieval

- Five-year disease risk prediction

- Radiology report generation

- 3D organ segmentation across 20 structures

The team reported that on the zero-shot classification task -- where the model identifies findings without any task-specific training -- Merlin achieved an F1 score of 0.741 on internal data, outperforming existing 2D models (including OpenCLIP and BiomedCLIP). The researchers also found that Merlin could predict whether a currently healthy patient would develop one of six chronic conditions -- including diabetes, hypertension, and cardiovascular disease -- within five years, with an average area under the receiver operating characteristic curve (AUROC) of 0.757.
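For readers less familiar with the two metrics cited above, the following is a minimal Python sketch of how an F1 score and an AUROC are computed for a binary task. The labels, scores, and the 0.5 decision threshold are made-up toy values for illustration only; they are not data from the study, and this is not the authors' evaluation code.

```python
def f1_score(y_true, y_pred):
    """F1 = harmonic mean of precision and recall for binary labels (0/1)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def auroc(y_true, scores):
    """AUROC as the probability that a random positive case is scored
    higher than a random negative case (ties count as half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


# Toy example (hypothetical values, not from the Merlin study).
labels = [1, 0, 1, 1, 0, 0]                 # 1 = finding present
scores = [0.9, 0.4, 0.35, 0.8, 0.1, 0.7]    # model confidence per scan
preds = [1 if s >= 0.5 else 0 for s in scores]  # assumed 0.5 threshold

print(f"F1:    {f1_score(labels, preds):.3f}")   # F1:    0.667
print(f"AUROC: {auroc(labels, scores):.3f}")     # AUROC: 0.778
```

F1 depends on the chosen decision threshold, while AUROC summarizes how well the scores rank positive cases above negative ones across all thresholds, which is one reason the two numbers are reported for different kinds of tasks.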

Blankemeier and colleagues did acknowledge that single-GPU training (the group used an NVIDIA A6000 GPU) could limit the model's performance, that report generation tended to underreport positive findings, and that "further work is needed to optimize segmentation."

But the model shows promise, according to the authors.

"Merlin lays the groundwork for training anatomy-specific and modality-specific radiology foundation models," they concluded. "Future work can mimic our benchmarking strategy and explore the relative benefits of pretraining on multiple anatomies for the same modality, multiple modalities for the same anatomy, or both."

Merlin is publicly available through the following platforms: GitHub, Hugging Face, and PyPI.

Access the full study here.