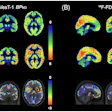

ChatGPT appears to have had some training in nuclear medicine, with the latest versions of the model correctly identifying the primary diagnosis in up to 89% of F-18 FDG-PET/CT brain scan cases when given the written report findings, according to a recent study.

A team at the University of Cologne in Germany found that ChatGPT-4o and ChatGPT-5 achieved high median diagnostic agreement scores against expert nuclear medicine physicians across 100 neurodegenerative disease cases, without access to imaging data or task-specific training.

“While ChatGPT is not approved for clinical diagnostics, it demonstrated substantial diagnostic alignment with expert physicians when interpreting textual case information,” wrote lead author Arndt-Hendrik Schievelkamp, MD, and colleagues. The study was published April 21 in the European Journal of Radiology Artificial Intelligence.

Large language models (LLMs) such as ChatGPT have gained significant attention for their potential to assist in the interpretation of medical data, with early models showing potential for diagnosing nuclear medicine cases. Yet few studies have explored their specific potential for interpreting cerebral F-18 FDG-PET/CT scans, which play a central role in the differential diagnosis of neurodegenerative diseases, the authors noted.

To that end, the group fed ChatGPT-4o and ChatGPT-5 the textual descriptions of imaging findings, as well as clinical history and patient age, from 100 F-18 FDG-PET/CT reports from patients evaluated for suspected neurodegenerative disease. Both models were queried via a web interface using a standardized prompt, without fine-tuning. Model output was compared against descriptions by expert nuclear medicine physicians who interpreted the imaging studies in routine practice, with performance scored on a five-level scale ranging from 0 (no agreement) to 1.0 (complete agreement).

According to the results, the median agreement score was 1.00 for both ChatGPT-4o and ChatGPT-5. ChatGPT-4o correctly identified the main diagnosis in 86% of cases, with 52% of cases scoring 1.00 and 12% scoring 0.00. ChatGPT-5 performed slightly better, identifying the correct main diagnosis in 89% of cases, with 60% scoring 1.00 and only 8% scoring 0.00.
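A median of 1.00 follows directly from more than half of the cases receiving the top score. The short Python sketch below illustrates that arithmetic. The counts at 1.00 and 0.00 come from the reported percentages for 100 cases. The article does not give the intermediate scores, so the `PLACEHOLDER` value of 0.5 is an assumption used only to fill the remaining cases, and the `summarize` helper is hypothetical.

```python
from statistics import median


def summarize(scores):
    """Return the median score and the shares of cases scored 1.0 and 0.0."""
    n = len(scores)
    return {
        "median": median(scores),
        "share_complete": sum(s == 1.0 for s in scores) / n,
        "share_none": sum(s == 0.0 for s in scores) / n,
    }


# ASSUMPTION: the distribution of intermediate scores is not reported.
# Every case that scored neither 1.00 nor 0.00 is filled with this stand-in.
PLACEHOLDER = 0.5

# Counts at 1.0 and 0.0 follow the reported percentages for 100 cases.
gpt4o = [1.0] * 52 + [0.0] * 12 + [PLACEHOLDER] * 36
gpt5 = [1.0] * 60 + [0.0] * 8 + [PLACEHOLDER] * 32

print(summarize(gpt4o))
print(summarize(gpt5))
```

Because more than 50 of the 100 cases sit at 1.00 for each model, the median stays at 1.00 whatever values the intermediate cases actually took.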

The authors noted that both models excelled on cases with well-defined metabolic patterns, such as in typical cases of Alzheimer's disease, yet were less accurate on complex cases involving atypical or overlapping findings. In addition, in a 20-case reproducibility subset, exact run-to-run agreement was 75% for ChatGPT-4o and 55% for ChatGPT-5.
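For readers unfamiliar with the metric, exact run-to-run agreement can be sketched as the share of cases in which repeated queries return the same result. The Python example below is a minimal illustration, not the authors' method. It assumes two runs per case and a single comparable output per run, neither of which the article specifies. The `exact_agreement` function and the labels are hypothetical.

```python
def exact_agreement(first_run, second_run):
    """Share of cases where two runs produced identical outputs."""
    if not first_run or len(first_run) != len(second_run):
        raise ValueError("runs must be non-empty and of equal length")
    matches = sum(a == b for a, b in zip(first_run, second_run))
    return matches / len(first_run)


# HYPOTHETICAL data: 20 cases, with 15 outputs repeated exactly on the second run.
first_run = ["label_a"] * 20
second_run = ["label_a"] * 15 + ["label_b"] * 5

print(exact_agreement(first_run, second_run))
```

Under these assumptions, 15 matching cases out of 20 correspond to the 75% reported for ChatGPT-4o, and 11 out of 20 to the 55% reported for ChatGPT-5.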

“These findings suggest that AI can complement physician expertise by potentially improving diagnostic efficiency and consistency,” the group wrote.

The authors noted that ChatGPT was not specifically trained or fine-tuned for the study and that the models operated solely based on their pre-existing general knowledge and capabilities. Thus, it stands to reason that appropriate training could quickly lead to even better and more consistent results, they suggested.

Future approaches may combine automated image-analysis systems capable of identifying characteristic metabolic patterns on F-18 FDG-PET scans with LLMs that interpret the structured textual descriptions of the findings, according to the authors.

“When reviewed by an expert physician, AI-generated assessments may support clinical workflows while preventing potential AI-driven misinterpretation of PET-imaging findings,” the group concluded.
