Deep learning could improve echocardiography for detecting major cardiac conditions, suggest findings published March 17 in Nature Cardiovascular Research.

A team led by Geoffrey Tison, MD, from University of California, San Francisco (UCSF), developed a deep neural network (DNN) architecture that simultaneously combines information from multiple video views. The DNN improved differentiation of imaging features on echo exams, the team found.

“This demonstrates that AI models that can combine information from multiple imaging views simultaneously can better capture complex anatomy and physiology for certain tasks, underscoring the value of a multiview paradigm for AI in medical imaging,” Tison and colleagues wrote.

DNNs trained on image or video data can detect various diseases by analyzing an image’s raw pixels or, for video, changes across sequential frames over time. However, DNNs are generally not well suited to integrating multiple 2D imaging views at the same time, as physicians do to accurately assess 3D structures.

“In cardiac echo, for example, reliable diagnosis most often depends upon the corroboration of information contained across multiple echo views,” the researchers wrote.

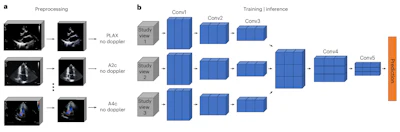

Tison et al developed a purpose-built multiview DNN architecture that integrates 3D video information from multiple complementary 2D imaging views, mirroring how a physician interprets 3D anatomic structures. The architecture accepts inputs from multiple views and integrates information between them through dedicated DNN layers. It uses a midfusion approach, combining features from each input view at an intermediate stage.
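The midfusion idea can be illustrated with a toy sketch: each view is encoded separately, the per-view features are stacked along a new "view" axis, and a later layer mixes information across that axis before a final prediction. This is not the study's implementation. The averaging encoder, the weighted-sum mixing layer, and every function name and weight below are assumptions chosen only to keep the example small; the actual model uses convolutional blocks.

```python
from typing import Dict, List

Vector = List[float]
Video = List[List[float]]  # frames x flattened pixels (toy representation)


def encode_view(frames: Video) -> Vector:
    """Toy per-view encoder (assumption): average each pixel position over time."""
    n_frames = len(frames)
    return [sum(column) / n_frames for column in zip(*frames)]


def stack_views(features: Dict[str, Vector], order: List[str]) -> List[Vector]:
    """Stack per-view feature vectors along a new leading 'view' axis."""
    return [features[name] for name in order]


def cross_view_mix(stacked: List[Vector], view_weights: Vector) -> Vector:
    """Toy cross-view layer (assumption): weighted sum across views at each feature position."""
    return [
        sum(w * value for w, value in zip(view_weights, column))
        for column in zip(*stacked)
    ]


def midfusion_predict(
    videos: Dict[str, Video],
    order: List[str],
    view_weights: Vector,
    head_weights: Vector,
) -> float:
    """Encode each view separately, fuse at an intermediate stage, then predict."""
    features = {name: encode_view(videos[name]) for name in order}
    stacked = stack_views(features, order)
    fused = cross_view_mix(stacked, view_weights)
    return sum(w * f for w, f in zip(head_weights, fused))


# Made-up two-frame, two-pixel "videos" for the three views named in the article.
videos = {
    "A4c": [[1.0, 2.0], [3.0, 4.0]],
    "A2c": [[0.0, 0.0], [2.0, 2.0]],
    "PLAX": [[4.0, 4.0], [4.0, 4.0]],
}
score = midfusion_predict(
    videos,
    order=["A4c", "A2c", "PLAX"],
    view_weights=[0.5, 0.25, 0.25],  # hypothetical weights
    head_weights=[1.0, -1.0],        # hypothetical weights
)
print(score)
```

The point of the sketch is the ordering of operations: fusion happens after some per-view processing but before the final prediction, so the later layers can learn relationships between views rather than treating each view's output independently.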

Multiview neural network architecture and data preprocessing for the demonstration imaging modality of cardiac echo. (a) All videos from a single echo study undergo standardized preprocessing (masking to exclude nonmoving pixels and pixels outside the ultrasound region, cropping to ultrasound image region and resizing to 224 × 224 pixels). Each echo video’s view and presence of color Doppler signal are detected using trained view-classification and Doppler-detection DNNs. (b) One echo video from each predefined echo view (A4c, A2c, and PLAX) accepted by the multiview DNN is selected from the same echo study; these three echo videos are used as simultaneous inputs into the multiview DNN classifier to predict the target task. Embeddings from each video are passed through individual convolutional encoder blocks (Conv1 to Conv3) before they are concatenated along a new dimension and then passed through two more convolutional blocks (Conv4 and Conv5) that perform cross-view convolutions to integrate spatiotemporal information between views before the final prediction is made. Images are republished under a Creative Commons Attribution 4.0 International License.
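The masking and cropping steps in panel (a) can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not the authors' pipeline: a pixel is treated as "moving" if its intensity range over time exceeds a hypothetical `threshold`, the crop is the bounding box of moving pixels, and the final resize to 224 × 224 is omitted. All function names are invented for the example.

```python
from typing import List, Tuple

Frame = List[List[float]]  # rows x columns of pixel intensities


def motion_mask(frames: List[Frame], threshold: float = 0.0) -> List[List[bool]]:
    """Mark a pixel True if its intensity range over time exceeds the threshold."""
    rows, cols = len(frames[0]), len(frames[0][0])
    mask = []
    for r in range(rows):
        mask_row = []
        for c in range(cols):
            values = [frame[r][c] for frame in frames]
            mask_row.append(max(values) - min(values) > threshold)
        mask.append(mask_row)
    return mask


def apply_mask(frames: List[Frame], mask: List[List[bool]]) -> List[Frame]:
    """Zero out every pixel that the mask marks as nonmoving."""
    return [
        [
            [px if keep else 0.0 for px, keep in zip(frame_row, mask_row)]
            for frame_row, mask_row in zip(frame, mask)
        ]
        for frame in frames
    ]


def bounding_box(mask: List[List[bool]]) -> Tuple[int, int, int, int]:
    """Return (top, bottom, left, right) of the moving region; bottom and right are exclusive."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    cols = [c for c in range(len(mask[0])) if any(row[c] for row in mask)]
    if not rows:
        raise ValueError("no moving pixels found")
    return rows[0], rows[-1] + 1, cols[0], cols[-1] + 1


def crop(frames: List[Frame], box: Tuple[int, int, int, int]) -> List[Frame]:
    """Crop every frame to the given bounding box."""
    top, bottom, left, right = box
    return [[row[left:right] for row in frame[top:bottom]] for frame in frames]


# Made-up 3 x 3 video: a static border (value 9.0) around one changing pixel.
frames = [
    [[9.0, 9.0, 9.0], [9.0, 1.0, 9.0], [9.0, 9.0, 9.0]],
    [[9.0, 9.0, 9.0], [9.0, 5.0, 9.0], [9.0, 9.0, 9.0]],
]
mask = motion_mask(frames)
cropped = crop(apply_mask(frames, mask), bounding_box(mask))
print(cropped)
```

Static content such as burned-in text never changes between frames, so a temporal-range test of this kind removes it while keeping the moving ultrasound region.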

The researchers used echocardiogram data from UCSF and the Montreal Heart Institute and applied their DNN approach to three primary tasks: detecting any left or right ventricular abnormality, diastolic dysfunction, and substantial valvular regurgitation.

Across various tasks, the multiview DNNs improved discrimination (measured by area under the receiver operating characteristic curve [AUC]) by 0.06 to 0.09 compared with DNNs trained on any single view.
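For readers unfamiliar with the metric, AUC can be read as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case. The sketch below computes it by direct pairwise comparison; the labels and scores are made up for illustration and are not data from the study.

```python
from typing import List


def auc(labels: List[int], scores: List[float]) -> float:
    """AUC as the fraction of positive/negative pairs ranked correctly (ties count half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Made-up example: two positive and two negative studies scored by two hypothetical models.
labels = [1, 1, 0, 0]
single_view_scores = [0.9, 0.4, 0.5, 0.1]
multiview_scores = [0.9, 0.6, 0.5, 0.1]

single_view_auc = auc(labels, single_view_scores)
multiview_auc = auc(labels, multiview_scores)
print(single_view_auc, multiview_auc, multiview_auc - single_view_auc)
```

An AUC difference such as the 0.06 to 0.09 reported here is the gap between two values computed this way on the same test cases.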

In the held-out UCSF test datasets for all three tasks, the multiview DNN achieved high marks for AUC, sensitivity, and specificity.

Performance of multiview DNN on echo findings

| Measure | LV/RV abnormality | Diastolic dysfunction | Valve regurgitation |
| --- | --- | --- | --- |
| AUC | 0.91 | 0.84 | 0.9 |
| Sensitivity | 81% | 74% | 83% |
| Specificity | 84% | 78% | 83% |
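Sensitivity and specificity, unlike AUC, depend on a decision threshold applied to the model's output score. A minimal sketch of the calculation follows, with made-up labels and scores and a hypothetical threshold of 0.5; the study's actual thresholds are not given in this article.

```python
from typing import List, Tuple


def sensitivity_specificity(
    labels: List[int], scores: List[float], threshold: float
) -> Tuple[float, float]:
    """Return (sensitivity, specificity) when scores >= threshold are called positive."""
    tp = fn = tn = fp = 0
    for y, s in zip(labels, scores):
        predicted_positive = s >= threshold
        if y == 1 and predicted_positive:
            tp += 1
        elif y == 1:
            fn += 1
        elif predicted_positive:
            fp += 1
        else:
            tn += 1
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one positive and one negative case")
    return tp / (tp + fn), tn / (tn + fp)


# Made-up example: four studies with the finding, four without.
labels = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.4, 0.2, 0.1]
sens, spec = sensitivity_specificity(labels, scores, threshold=0.5)
print(f"sensitivity={sens:.0%}, specificity={spec:.0%}")
```

Lowering the threshold would raise sensitivity at the cost of specificity, which is why both figures are reported alongside the threshold-independent AUC.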

On the external validation dataset (Montreal Heart Institute), the multiview DNN achieved the following AUC values for each task: LV/RV abnormality, 0.91; valve regurgitation, 0.92; and diastolic dysfunction, 0.79.

The researchers noted that the AUCs for LV/RV abnormality and valve regurgitation were comparable to the DNN’s performance in the UCSF test dataset, while the diastolic dysfunction result showed “reasonable generalization with modest performance degradation” compared with the UCSF test dataset.

“If confirmed by future work applying multiview DNNs to other imaging modalities and diseases and in multi-institutional settings, the multiview approach provides a powerful paradigm to train multiview optimized AI models for medical imaging,” the study authors wrote.
