CHICAGO -- AI leads to improvements in thyroid ultrasound interpretation among inexperienced readers, according to research presented November 30 at RSNA 2025.

Min Young Kim from Ewha Womans University in Seoul, South Korea, presented results showing that AI assistance improved ultrasound interpretation among inexperienced readers, while experienced readers saw no added benefit.

“AI assistance significantly improved the diagnostic performance of less experienced readers, effectively narrowing the gap in expertise,” Kim said.

Even with the Thyroid Imaging Reporting and Data System (TI-RADS), interobserver agreement for interpreting thyroid ultrasound images remains only fair to moderate. Prior research has shown AI’s potential to assist in ultrasound interpretation, especially for inexperienced readers.

Kim suggested that a weakly supervised AI system can automatically detect lesions and predict malignancy without manual selection of regions of interest.

Kim and colleagues studied the effect of AI-based decision support on the interpretation of thyroid ultrasound exams. They evaluated the system with both experienced and inexperienced readers.

The study included 481 thyroid ultrasound images from 481 patients. Eight readers took part: four board-certified radiologists with three to 23 years of experience, an endocrine surgeon, a first-year radiology resident, a nurse, and a radiographer with less than one year of experience.

The team used a deep learning-based AI system (CadAI-T for Thyroid, BeamWorks) to help the readers. Each reader independently reviewed the ultrasound images in two sessions. Session one included grayscale images alone. After a three-week washout period, session two included grayscale images with AI results. The team also asked the readers to indicate, for each case, whether they had confidence in the AI results.

Of the 481 images, 184 showed malignant nodules, 157 showed benign nodules, and 140 were negative, with no focal abnormality.

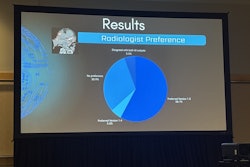

Among inexperienced readers, the area under the curve (AUC) increased from 0.75 without AI to 0.84 with AI (p < 0.001). Experienced readers, however, saw no significant added benefit (0.90 vs. 0.91, p = 0.092). The inexperienced readers also significantly improved on other performance metrics with AI assistance.

Kim also said that both experienced and inexperienced readers expressed confidence in the AI results when assessing malignant nodules.

Finally, the AI system achieved high standalone performance, with an AUC of 0.903 when using AI-based scoring and an AUC of 0.87 when using TI-RADS.

Kim said the results support AI’s utility as an aid to less experienced readers in the differential diagnosis of thyroid nodules.

“For expert readers, AI may serve as a second-opinion tool to increase diagnostic confidence,” she said.
